On this page, we implement the Word2Vec word embedding technique for creating word vectors with Python's Gensim library. We also review the other most commonly used word embedding techniques.

Word Embedding Approaches

One of the reasons natural language processing is a difficult problem to solve is that computers can only work with numbers. We have to represent words in a numeric format that computers can process. Word embeddings are numeric representations of words.

Some of the word embedding approaches that currently exist are:

- Word2Vec

- Bag of Words

- TF-IDF Scheme

Word2Vec

The Word2Vec approach uses a shallow neural network to convert words into corresponding vectors so that semantically similar words are close to each other in N-dimensional space, where N refers to the number of dimensions of the vector. Word2Vec produces some striking results. Its ability to preserve semantic relations is reflected in a classic example: if you take the vector for the word “Queen”, subtract the vector for the word “Woman” from it, and add the vector for “Man”, you get a vector which is close to the “King” vector. This relation is commonly represented as:

Queen – Woman + Man ≈ King
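The arithmetic behind this relation can be tried on a toy example. The sketch below uses hand-picked two-dimensional vectors (one axis for "royalty", one for "gender"). The values and the helper names `cosine` and `nearest` are illustrative assumptions, not vectors learned by Word2Vec.

```python
import math

# Hand-picked toy vectors: [royalty, gender]. Real Word2Vec vectors are
# learned from text and have many more dimensions.
vectors = {
    "king":  [1.0, 1.0],
    "queen": [1.0, -1.0],
    "man":   [0.0, 1.0],
    "woman": [0.0, -1.0],
    "apple": [-1.0, 0.0],
}

def cosine(a, b):
    """Cosine similarity between two non-zero vectors of equal length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def nearest(target, exclude):
    """Return the word (outside `exclude`) most similar to `target`."""
    candidates = (w for w in vectors if w not in exclude)
    return max(candidates, key=lambda w: cosine(vectors[w], target))

# Queen - Woman + Man, computed component by component
result = [q - w + m for q, w, m in
          zip(vectors["queen"], vectors["woman"], vectors["man"])]

print(result)
print(nearest(result, exclude={"queen", "woman", "man"}))
```

With a trained Gensim model, the same kind of query is usually written as `model.wv.most_similar(positive=["queen", "man"], negative=["woman"])`. The result depends on the training corpus, so a small corpus may not reproduce the analogy.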

The Word2Vec model comes in two flavors: the Skip Gram model and the CBOW (Continuous Bag of Words) model.

In the Skip Gram model, the context words are predicted from the base word. For example, given the sentence “I like to sing in the rain”, the Skip Gram model predicts “like” and “sing” given the word “to” as input.

Conversely, the CBOW model predicts “to” if the context words “like” and “sing” are fed as input to the model. The model learns these relationships by using a shallow neural network.
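To make the difference concrete, here is a minimal sketch of how training examples could be derived from the example sentence for each flavor. The helper `training_pairs` and the window size of 1 are assumptions chosen to match the example; it only builds the input/target pairs and does not train a network.

```python
def training_pairs(tokens, window=1):
    """Yield (center, context_words) for every position in `tokens`."""
    for i, center in enumerate(tokens):
        left = tokens[max(0, i - window):i]
        right = tokens[i + 1:i + 1 + window]
        yield center, left + right

sentence = "I like to sing in the rain".split()

# Skip Gram: the center word is the input, each context word is a target
skip_gram = [(center, ctx)
             for center, context in training_pairs(sentence)
             for ctx in context]

# CBOW: the context words are the input, the center word is the target
cbow = [(context, center) for center, context in training_pairs(sentence)]

print([pair for pair in skip_gram if pair[0] == "to"])
print([pair for pair in cbow if pair[1] == "to"])
```

For the word “to”, the Skip Gram list contains the pairs (“to”, “like”) and (“to”, “sing”), while the CBOW list contains a single example mapping [“like”, “sing”] to “to”.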

Pros and Cons of Word2Vec

Word2Vec has several advantages over the bag of words and TF-IDF schemes. It preserves the semantic meaning of the words in a document, so context information is not lost. Another advantage of the Word2Vec approach is that the embedding vector is small: typically a few hundred dimensions rather than one dimension per vocabulary word. Each dimension of the embedding vector carries information about some aspect of the word. We do not need huge sparse vectors, unlike the TF-IDF and bag of words approaches.

Note: The mathematical details of how Word2Vec works involve an explanation of neural networks and softmax probability, which is beyond the scope of this page. If you want to understand the mathematical foundations of Word2Vec, see the other articles on our site.

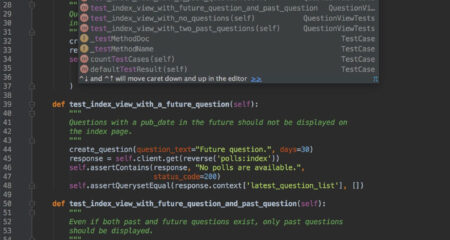

Word2Vec in Python with Gensim Library

In this section, we implement a Word2Vec model with the help of Python’s Gensim library. Follow the steps below:

Creating Corpus

To build a Word2Vec model, we need a corpus. In real-life applications, Word2Vec models are built from very large collections of documents. For example, Google’s pretrained Word2Vec model contains vectors for three million words and phrases. For the sake of simplicity, we are going to build a Word2Vec model from a single Wikipedia article. Our model will not be as good as Google’s.

Before we can build the model, we need to fetch the article. To do so we are going to use a couple of libraries. The first library we need to install is Beautiful Soup, a very useful Python utility for web scraping. Run the command below at the command prompt to install Beautiful Soup:

$ pip install beautifulsoup4

Another important library, which we need in order to parse XML and HTML, is lxml. Run the command below at the command prompt to install lxml:

$ pip install lxml

The article we will scrape is the Wikipedia article on Artificial Intelligence. Let us write a Python script to scrape the article from Wikipedia.

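import bs4 as bs

import urllib.request

import re

import nltk

# Download the raw HTML of the Wikipedia article
scrapped_data = urllib.request.urlopen('https://en.wikipedia.org/wiki/Artificial_intelligence')

article = scrapped_data.read()

# Parse the HTML with the lxml parser and collect all paragraph tags
parsed_article = bs.BeautifulSoup(article, 'lxml')

paragraphs = parsed_article.find_all('p')

# Concatenate the text of every paragraph into one string
article_text = ""

for p in paragraphs:

    article_text += p.text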

In the script above, we first download the Wikipedia article by using the urlopen function of the request module of the urllib library. Then, we read the article content and parse it by using an object of the BeautifulSoup class. We use the find_all method of the BeautifulSoup object to fetch all the content inside the paragraph tags of the article.
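Once the article text is available, it has to be turned into tokenized sentences before a model can be trained. The following is a minimal sketch of that step, not a definitive implementation: the helper names `preprocess` and `train_word2vec`, the regex-based cleaning, and the parameter values are assumptions, and the training call assumes Gensim 4.x (older versions name the `vector_size` parameter `size`).

```python
import re

def preprocess(text):
    """Turn raw article text into a list of tokenized sentences."""
    text = re.sub(r"\[[0-9]+\]", " ", text)       # drop reference markers like [12]
    sentences = re.split(r"(?<=[.!?])\s+", text)  # naive sentence split
    tokenized = []
    for sentence in sentences:
        words = re.findall(r"[a-z]+", sentence.lower())
        if words:
            tokenized.append(words)
    return tokenized

def train_word2vec(tokenized_sentences):
    """Train a small Word2Vec model (assumes Gensim 4.x is installed)."""
    # Imported here so that preprocess() works without Gensim installed
    from gensim.models import Word2Vec
    return Word2Vec(
        sentences=tokenized_sentences,
        vector_size=100,  # dimensionality N of the word vectors
        window=5,         # context words considered on each side
        min_count=2,      # ignore words seen fewer than 2 times
        sg=0,             # 0 = CBOW, 1 = Skip Gram
    )

sample = "AI is intelligence shown by machines.[1] Machines can learn!"
print(preprocess(sample))
```

With the `article_text` variable from the script above, the model could then be trained with `model = train_word2vec(preprocess(article_text))` and queried with `model.wv.most_similar("intelligence")`. A single article is a very small corpus, so the similarities it produces will be rough.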

Bag of Words

The bag of words approach is one of the simplest word embedding approaches. The following are the steps to generate word embeddings by using the bag of words approach.

We are going to look at the word embeddings generated by the bag of words approach with the help of an example. Suppose you have a corpus with three sentences.

- S1 = She likes rain

- S2 = rain rain goes away

- S3 = She is away

To convert the example sentences above into their corresponding word embedding representations by using the bag of words approach, follow the steps below:

- Make a dictionary of the unique words in the corpus. In the above corpus, we have the following unique words: [She, likes, rain, goes, away, is]

- Parse each sentence. For each word in the sentence, add 1 at the position of that word in the dictionary, and leave 0 for every dictionary word that does not appear in the sentence.
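The two steps above can be sketched in a few lines of plain Python. This is an illustrative sketch only; the names `corpus`, `vocabulary`, and `bag_of_words` are assumptions, and words are split on whitespace without any further cleaning.

```python
corpus = {
    "S1": "She likes rain",
    "S2": "rain rain goes away",
    "S3": "She is away",
}

# Step 1: dictionary of unique words, in order of first appearance
vocabulary = []
for sentence in corpus.values():
    for word in sentence.split():
        if word not in vocabulary:
            vocabulary.append(word)

# Step 2: one count per dictionary word for each sentence
def bag_of_words(sentence):
    words = sentence.split()
    return [words.count(term) for term in vocabulary]

print(vocabulary)
for name, sentence in corpus.items():
    print(name, bag_of_words(sentence))
```

For S2, the word “rain” occurs twice, so its position in the vector holds 2: the sentence becomes [0, 0, 2, 1, 1, 0]. Note that the vector length equals the dictionary size, which is why bag of words vectors grow large and sparse on real corpora.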

TF-IDF Scheme

The idea behind the TF-IDF scheme is that words which occur frequently in one document and rarely in all the other documents are more important for classification. TF-IDF is a product of two values: Term Frequency (TF) and Inverse Document Frequency (IDF). For instance, if you look at the sentence S1 from the previous section, “She likes rain,” every word in the sentence occurs once.
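As a rough illustration, the product can be computed for the three example sentences as follows. Several variants of the formula are in use; this sketch assumes TF = (occurrences of the word in the sentence) / (words in the sentence) and IDF = log(number of sentences / number of sentences containing the word). The function names are hypothetical.

```python
import math

documents = {
    "S1": "She likes rain".split(),
    "S2": "rain rain goes away".split(),
    "S3": "She is away".split(),
}

def term_frequency(term, words):
    """Share of the words in one document that are `term`."""
    return words.count(term) / len(words)

def inverse_document_frequency(term):
    """log(total documents / documents containing `term`).

    Assumes `term` occurs in at least one document.
    """
    containing = sum(1 for words in documents.values() if term in words)
    return math.log(len(documents) / containing)

def tf_idf(term, words):
    return term_frequency(term, words) * inverse_document_frequency(term)

for term in documents["S1"]:
    print(term, round(tf_idf(term, documents["S1"]), 3))
```

Every word in S1 has the same term frequency of 1/3, so the scores differ only through the IDF factor. “likes” appears in no other sentence and therefore gets the highest score, while “She” and “rain” each appear in two sentences and score lower.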

